Your face says sad.

Your words say fine.

This AI catches the difference.

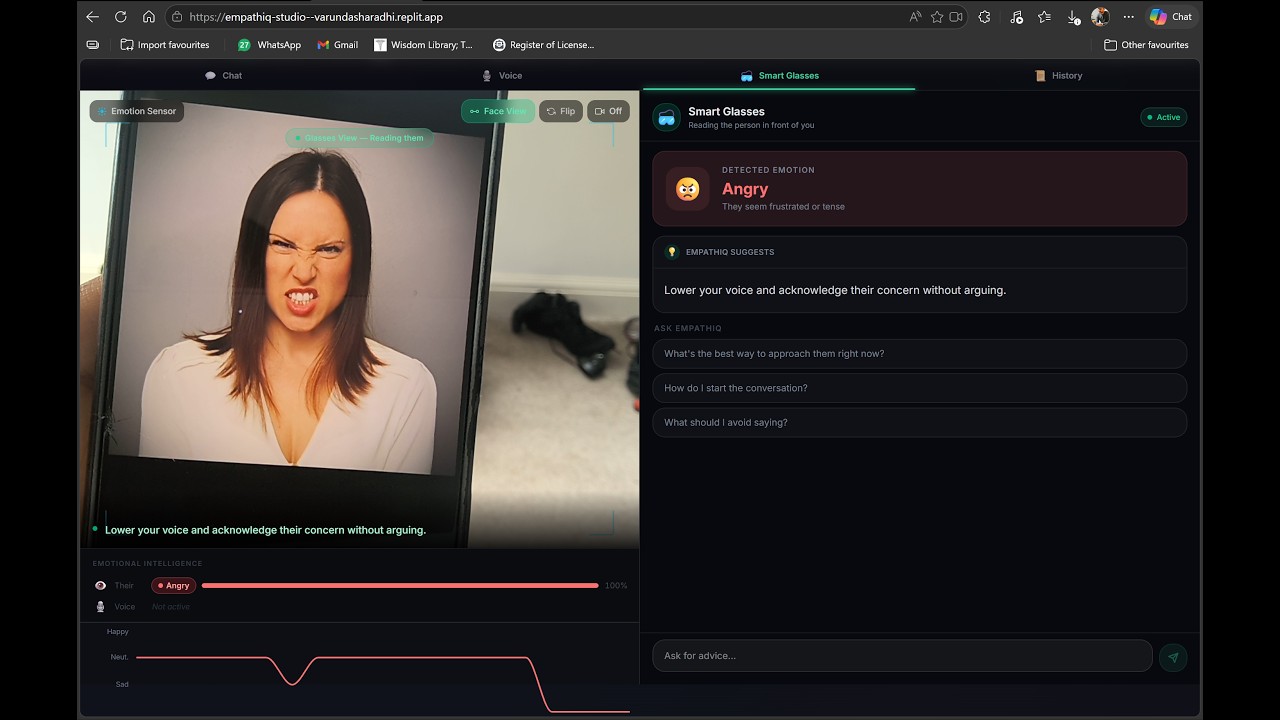

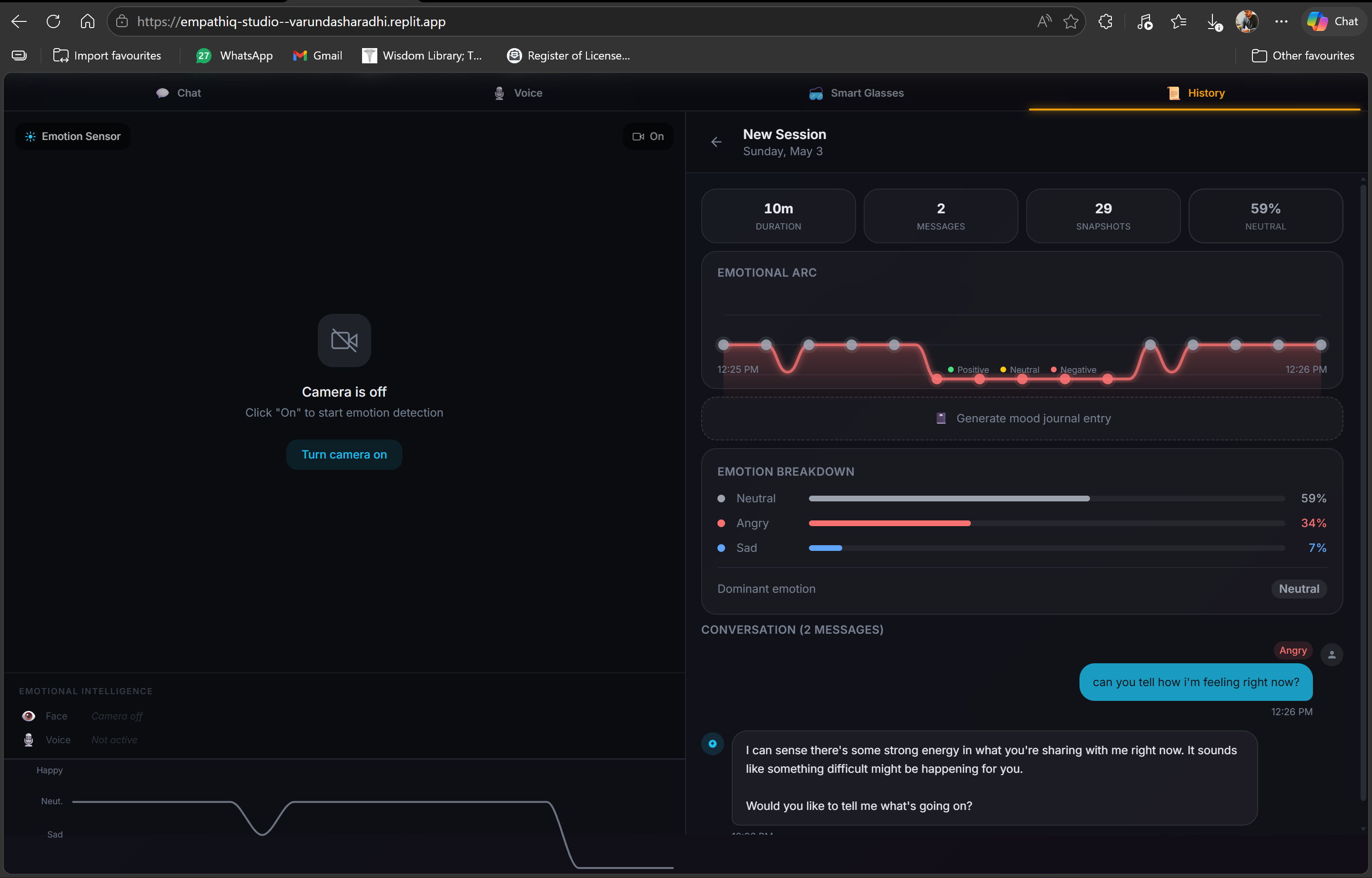

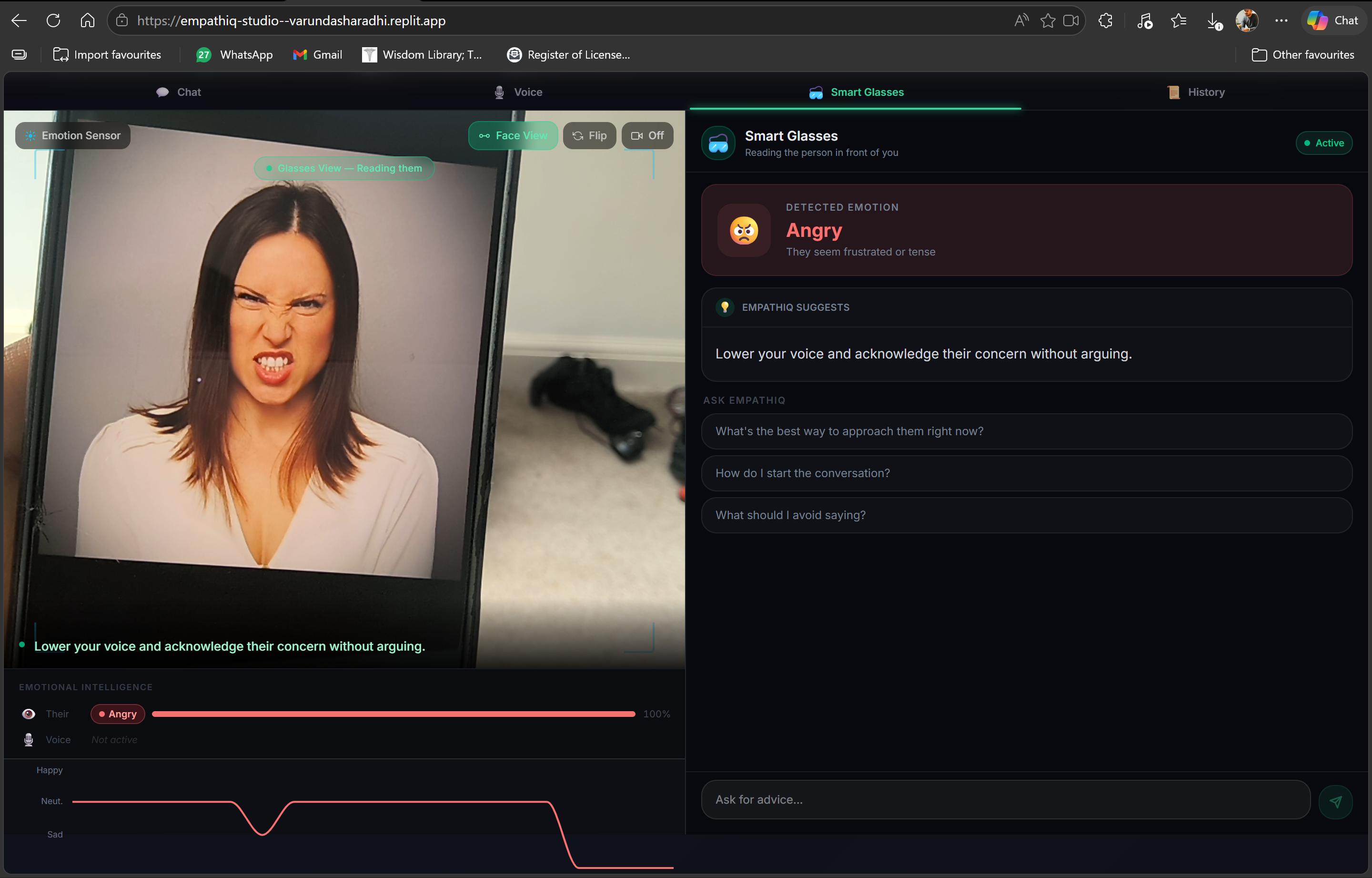

EmpathIQ reads your face, your voice, and your words simultaneously, and responds to how you actually feel, not just what you say.

Webcam, microphone, and chat: three inputs, one emotionally intelligent AI.

Smart glasses detecting anger in real time and coaching you on how to respond.

"What a beautiful idea!" — Gyuree, Replit community

50+ demo views in 48 hours

Built in 24 hours. Continuously improving. As in any new relationship, EmpathIQ gets better at understanding you the more you talk.